I've compiled a collection of formatted notes and presentations from courses I've taken and TA'd while at Stanford. This also includes miscellaneous notes and Pluto.jl notebooks that may be useful for studying.

Table of Contents

Presentations

Safe planning under uncertainty using surrogate models

PhD Defense, Stanford CS PhD, 2025

Details the primary research contributions of my PhD relating to safe planning and safety validation.

Mini-lecture on SignalTemporalLogic.jl

AA228V/CS238V: Validation of Safety-Critical Systems, Stanford University, 2025

Mini-lecture on using SignalTemporalLogic.jl for property specification of safety-critical systems.

Agents for Safety-Critical Applications

Stanford Intelligent Systems Laboratory (SISL), 2023

Explains how POMDPs are applied to solve safety-critical problems in aviation, autonomous navigation, and geological sustainability.

Bayesian Safety Validation for Black-Box Systems

AIAA AVIATION Forum, 2023

Efficiently estimating the probability of failure for safety-critical systems, applied to a neural network runway detector for an autonomous aircraft.

Letters to a Young Scientist: Annotated Lessons

CS239/AA229: Advanced Topics in Sequential Decision Making, Stanford University, 2020

Annotated lessons from Edward O. Wilson's Letters to a Young Scientist.

Learning Policies with External Memory

CS239/AA229: Advanced Topics in Sequential Decision Making, Stanford University, 2020

Simplified VAPS algorithm for online stigmergic policies, from Peshkin et al., ICML 2001.

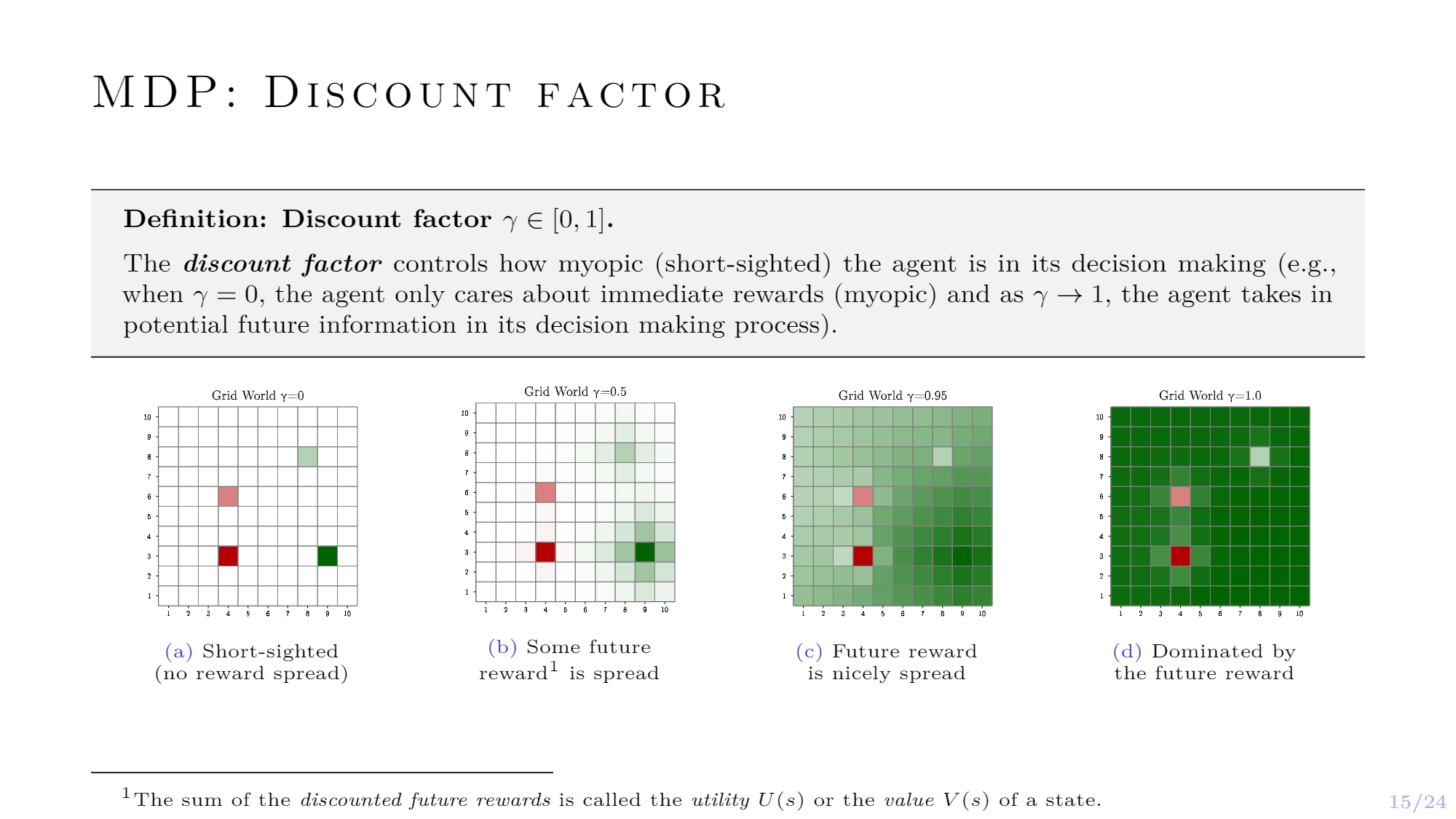

Markov Decision Processes (MDPs)

Decision Making Under Uncertainty using POMDPs.jl, Julia Academy, 2021

Definition and example of the Markov decision process (MDP) for a grid world problem, part of Decision Making Under Uncertainty using POMDPs.jl.

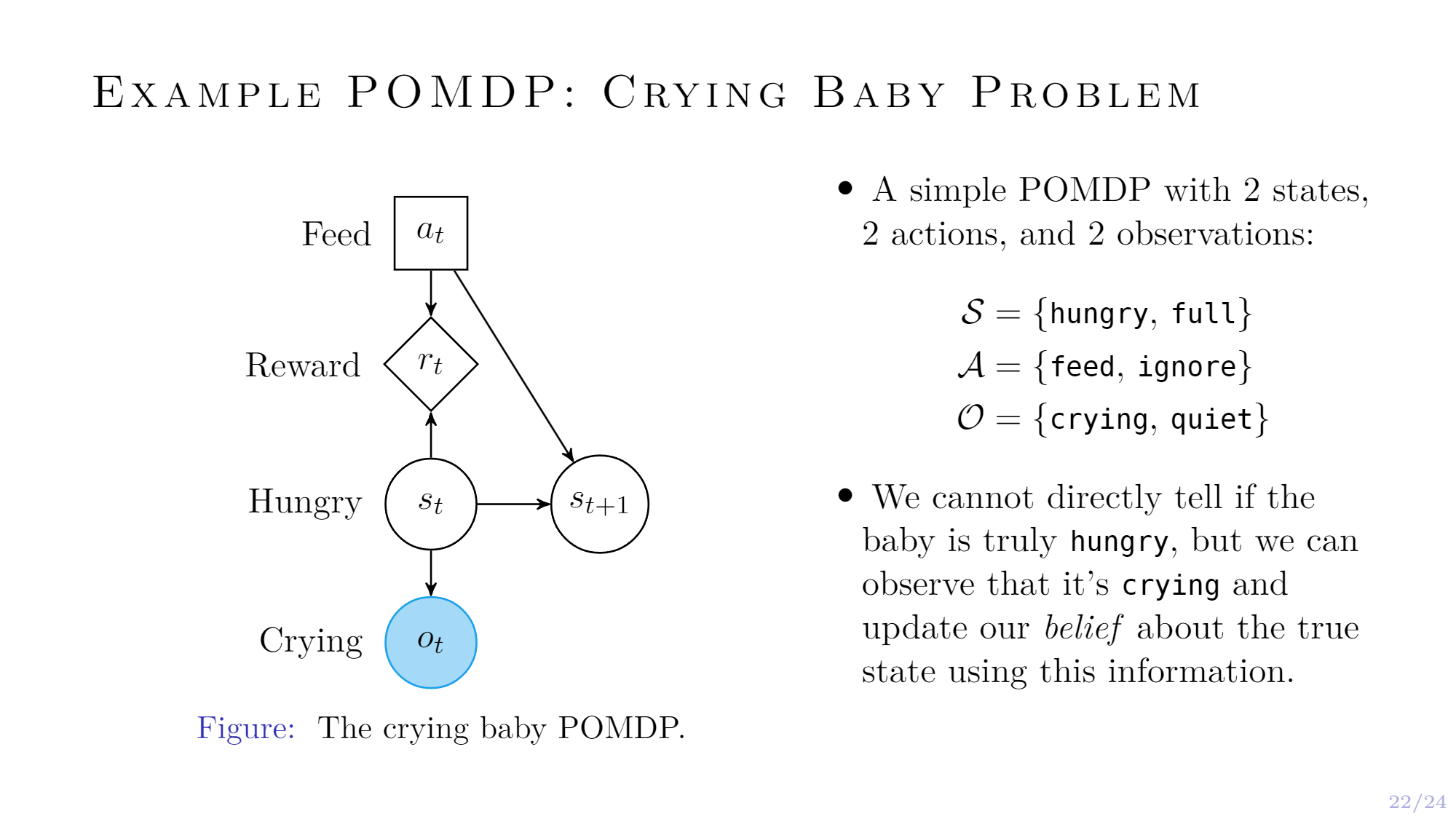

Partially Observable Markov Decision Processes (POMDPs)

Decision Making Under Uncertainty using POMDPs.jl, Julia Academy, 2021

Definition and example of the partially observable Markov decision process (POMDP) for the crying baby problem, part of Decision Making Under Uncertainty using POMDPs.jl.

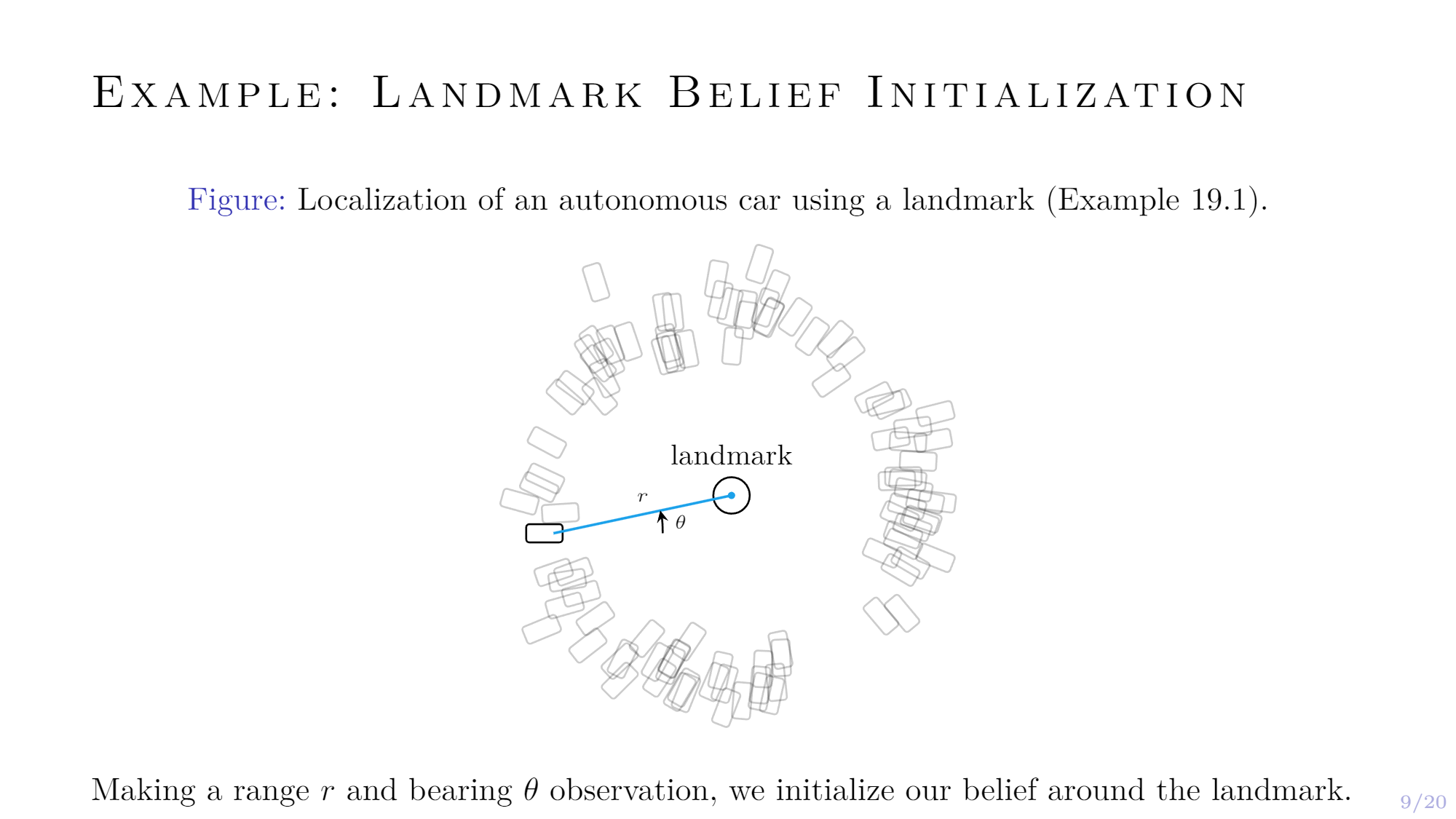

Beliefs: State Uncertainty

CS238/AA228: Decision Making Under Uncertainty, Stanford University, 2020

POMDPs, belief state representation, state uncertainty, particle filters, and Kalman filters.

Stanford Intelligent Systems Laboratory (SISL): An Overview

Stanford Center for Earth Resources Forecasting (SCERF), 2021

An overview of the research conducted at the Stanford Intelligent Systems Laboratory.

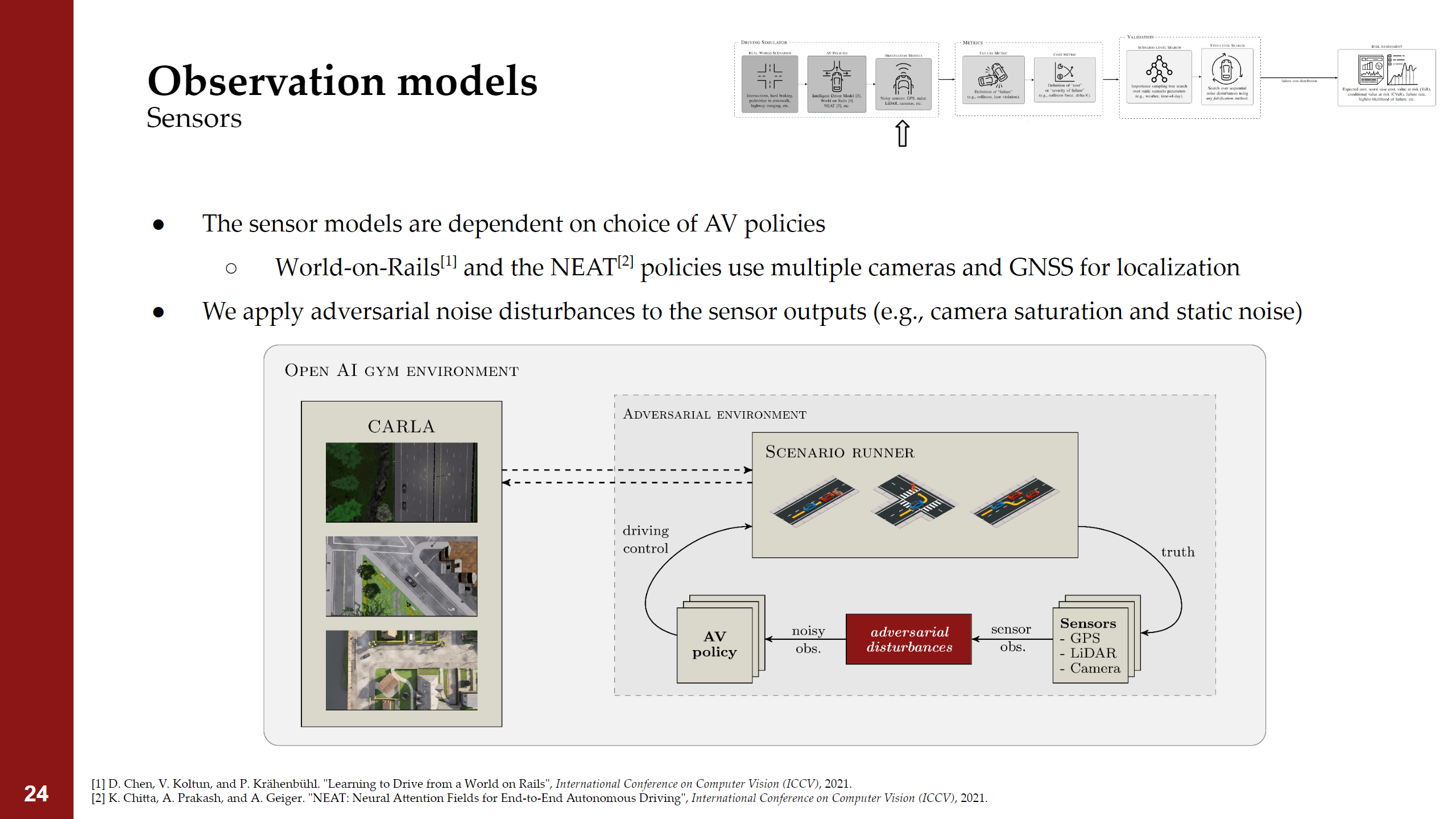

An Efficient Framework for Modular Autonomous Vehicle Risk Assessment (MAVRA)

IEEE International Conference on Intelligent Transportation Systems (ITSC), 2022

A framework for efficiently estimating the risk of autonomous vehicle policies in high-fidelity simulators.

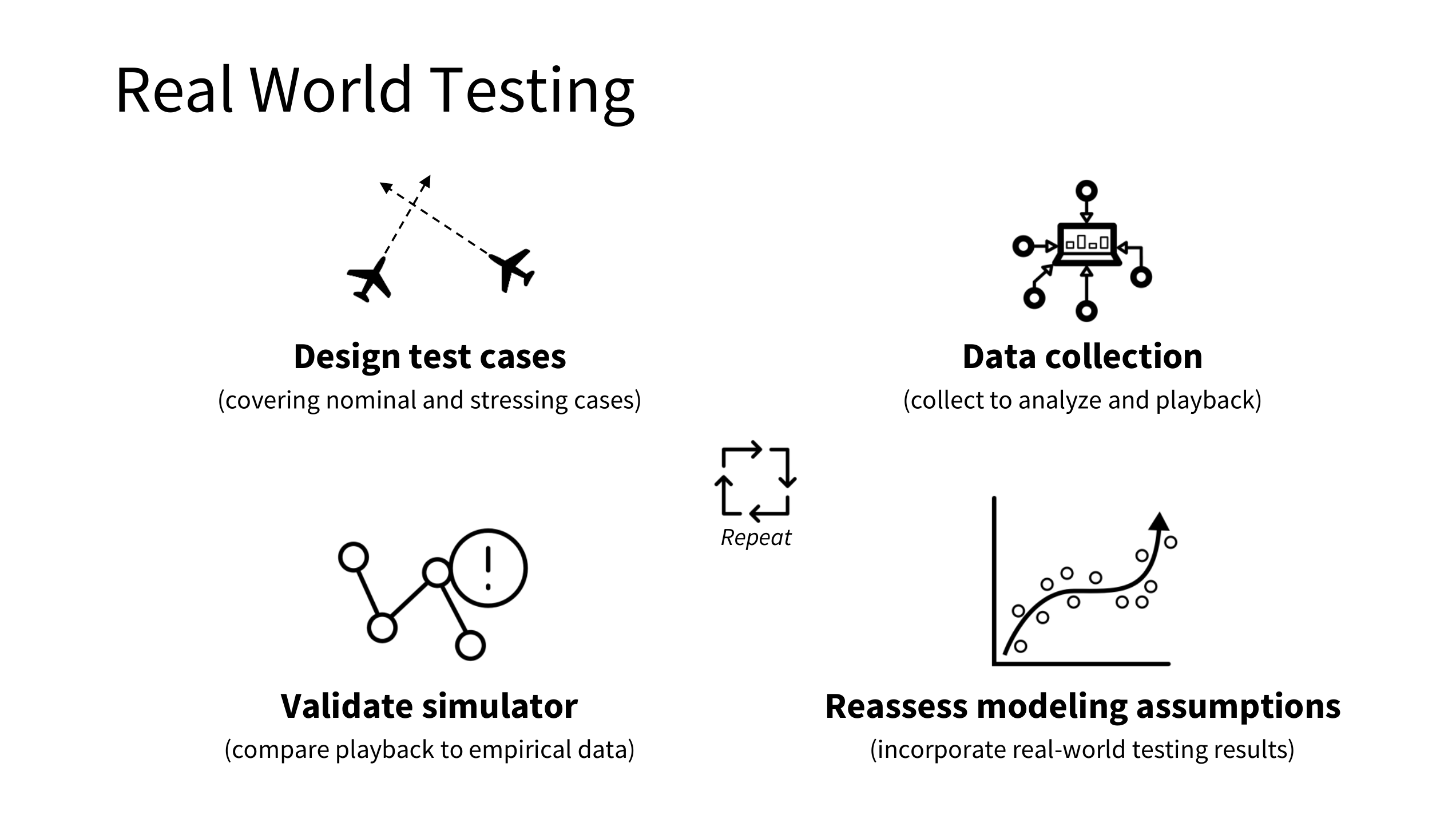

Transferring Aviation Safety Lessons to the Road

The National Academies of Sciences, Engineering, and Medicine, 2021

How lessons from the safety validation of aviation software can be transferred to autonomous driving.

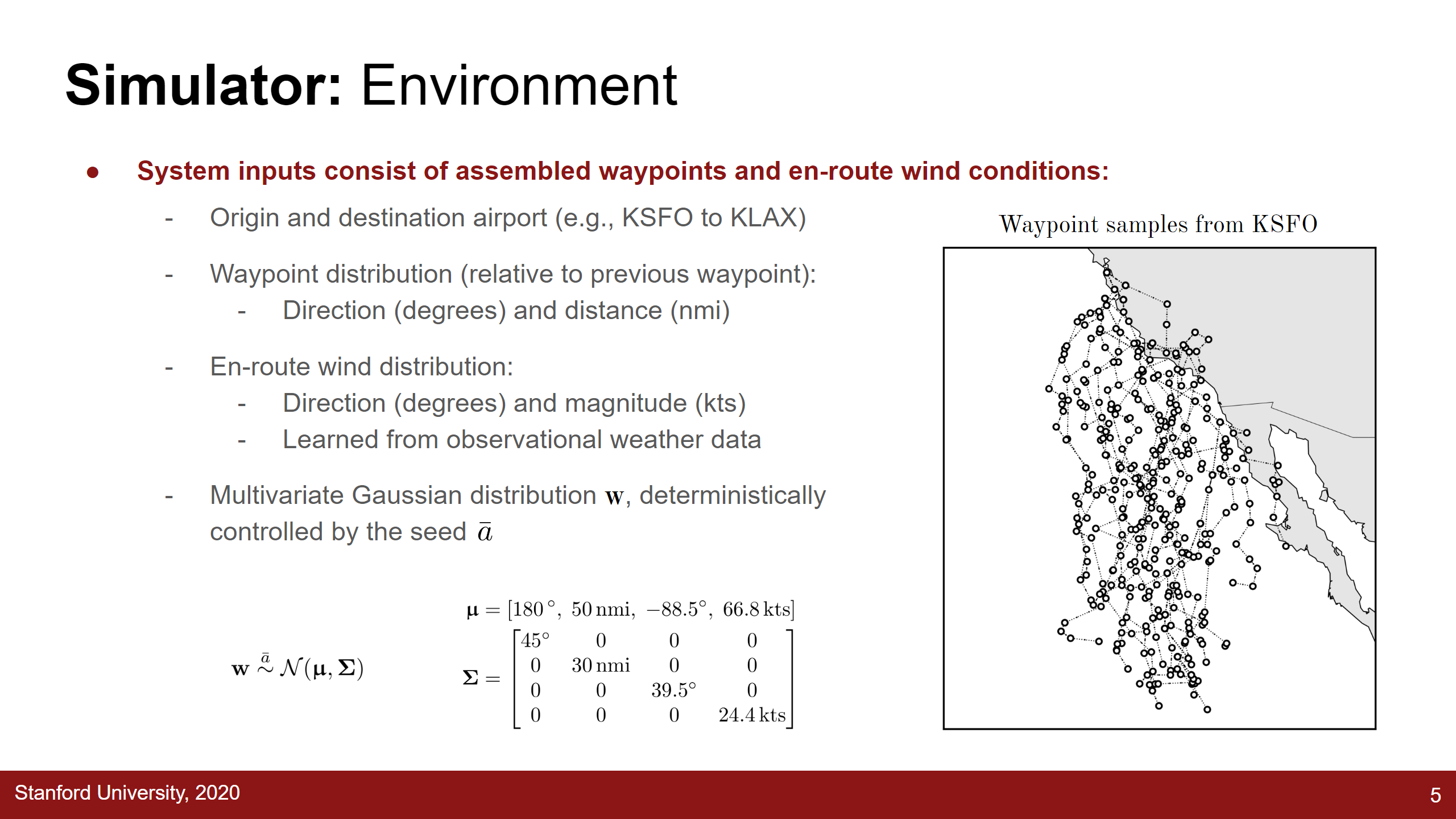

Adaptive Stress Testing of Trajectory Predictions in Flight Management Systems

IEEE/AIAA Digital Avionics Systems Conference, 2020

Black-box stress testing of an open-loop system with episodic reward.

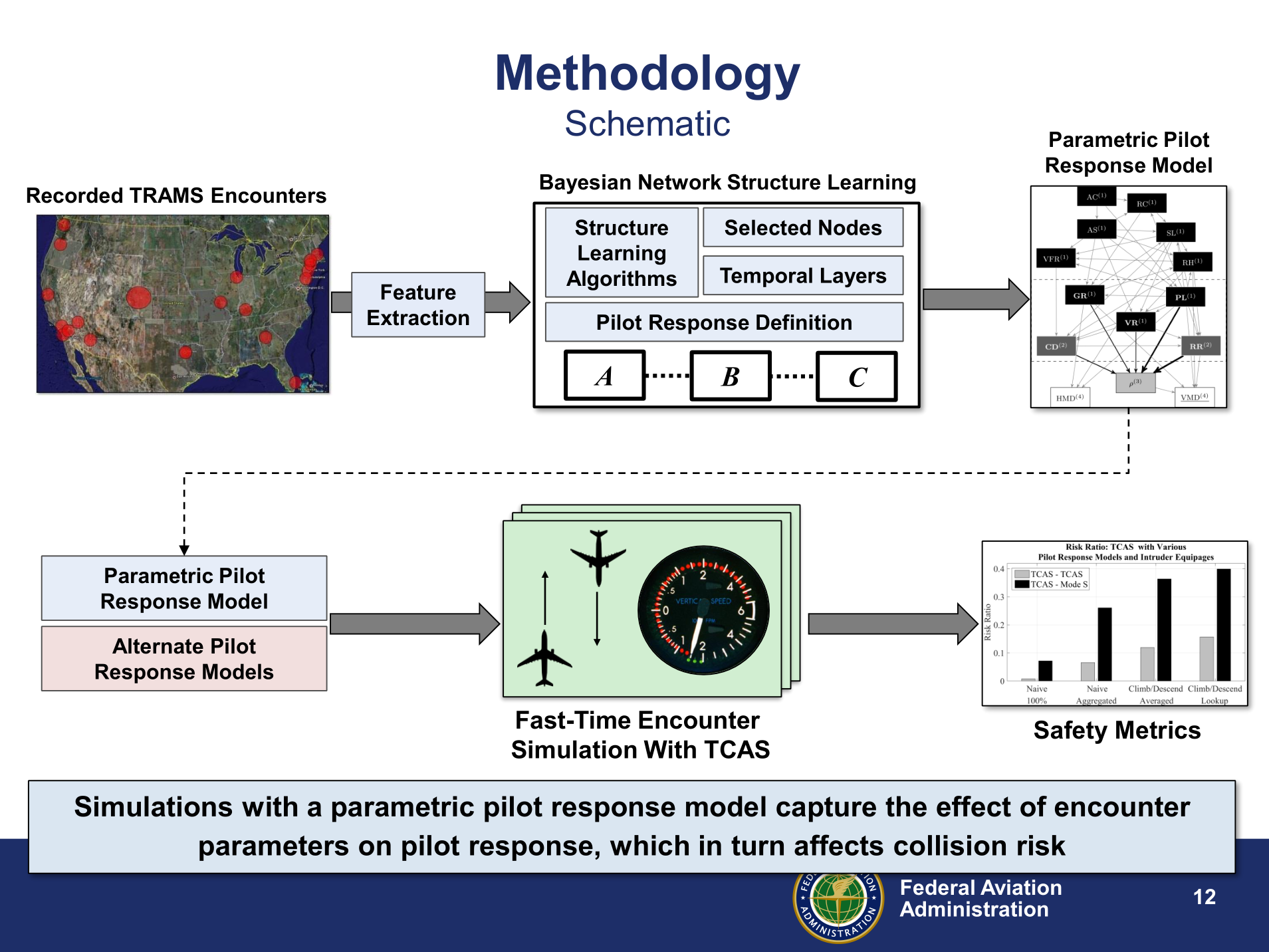

A Bayesian Network Model of Pilot Response to TCAS RAs

Air Traffic Management Research and Development Seminar (ATM R&D Seminar), 2017

Collecting radar data to learn a Bayesian network pilot response model to aircraft collision avoidance advisories.

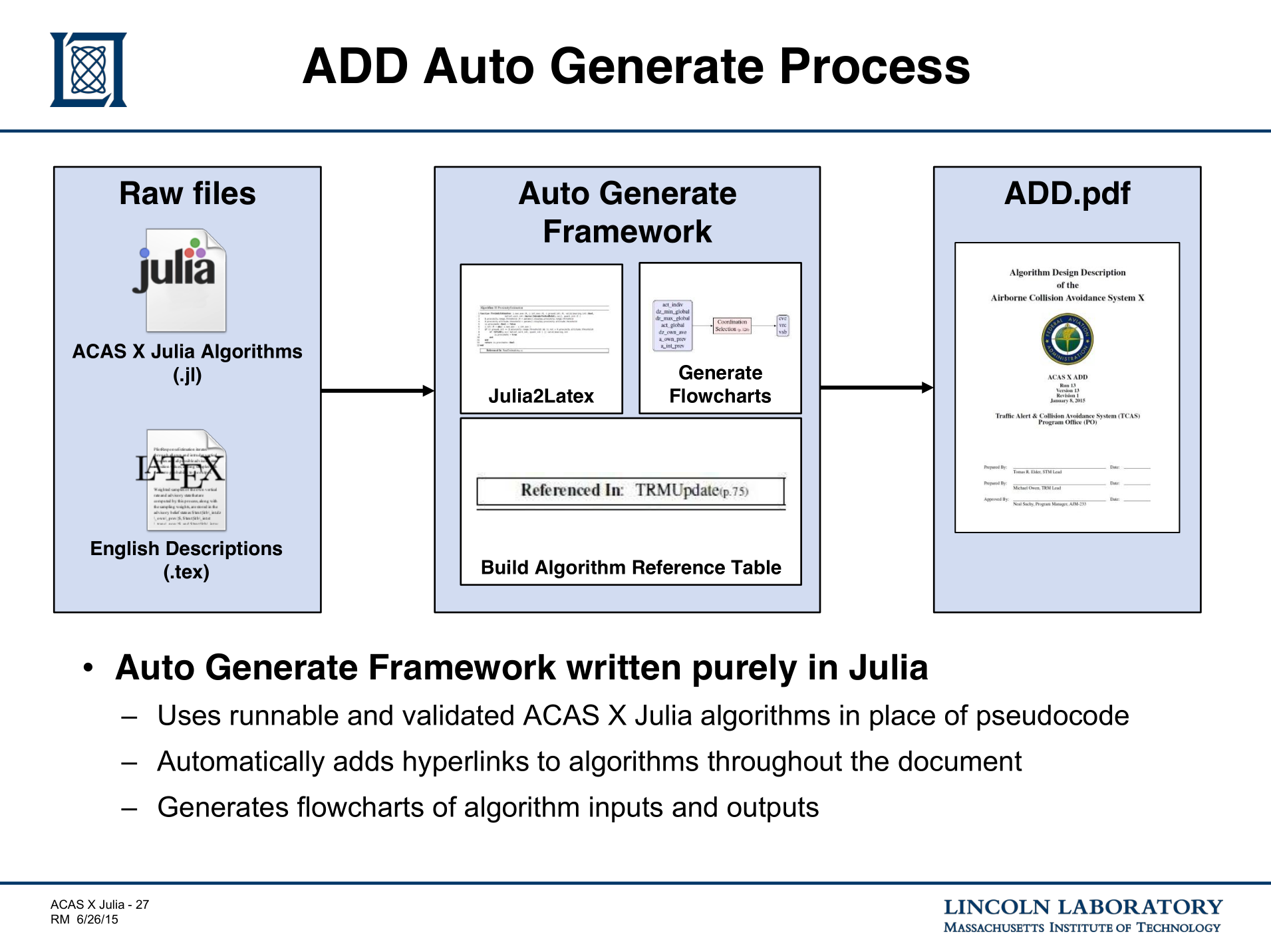

Using Julia as a Specification Language for Aircraft Collision Avoidance Systems (ACAS X)

JuliaCon, 2015

How the FAA is using the Julia programming language as a specification language for ACAS X.

Textbooks

I've re-typed and formatted several textbooks from Stanford course notes (the original authors are recognized within).

Probability for Computer Scientists

CS109: Probability for Computer Scientists, Stanford University, 2020

Machine Learning

CS229: Machine Learning, Stanford University, 2021 [LaTeX code]

Algorithms for Artificial Intelligence

CS221: Artificial Intelligence Principles and Techniques, Stanford University, 2021

Reinforcement Learning

CS234: Reinforcement Learning, Stanford University, 2021

Course Notes

Review: Unconstrained Optimization

CS361/AA222: Engineering Design Optimization, Stanford University, 2020

Review: Constrained Optimization

CS361/AA222: Engineering Design Optimization, Stanford University, 2020

Project: Constrained Optimization and Expression Optimization

CS361/AA222: Engineering Design Optimization, Stanford University, 2020

Constrained optimization with JuMP.jl, and expression optimization of trinary star system motion using ExprOptimization.jl.

Implementation: Learning Policies with External Memory

CS239/AA229: Advanced Topics in Sequential Decision Making, Stanford University, 2020

Reinforcement Learning Algorithms and Equations

Robert J. Moss, 2020

Markov Decision Process: Chain Rule

Robert J. Moss, 2020

Loss Functions in Machine Learning

Robert J. Moss, 2020

TeX.jl.

Deriving the Quadratic Formula

Robert J. Moss, 2020

TeX.jl.